What AI Orchestration Actually Requires (And Why Most Platforms Only Go Halfway)

Ask any data team lead how their week is going and you will likely hear the same answer: buried. Not in analysis, not in decisions, but in the grunt work that precedes both. Pulling data from five different systems, reconciling mismatched schemas, rebuilding the same Excel file that broke when someone updated the source, and somehow still delivering a report by end of day. The tools were supposed to fix this. The dashboards, the data warehouses, the automation scripts, the AI assistants. And yet, for most enterprise data teams, the manual overhead has not meaningfully shrunk. If anything, the proliferation of tools has added coordination complexity on top of the original problem.

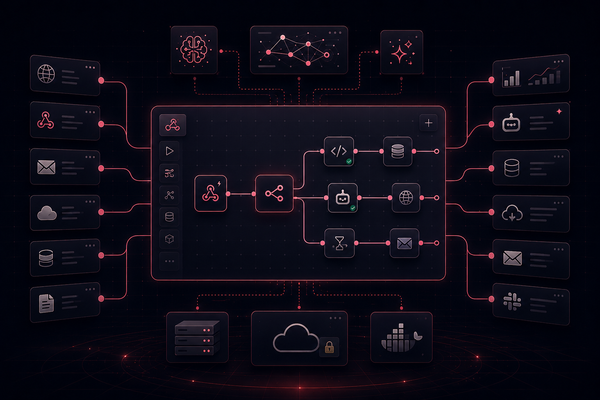

AI orchestration is the category that promises to finally close the gap. The idea is straightforward and genuinely compelling: rather than stitching together point solutions by hand, an orchestration layer coordinates every component of the data and analytics workflow automatically, from ingestion through transformation to output delivery. Agents handle the tasks, pipelines run on schedule, and analysts get to focus on what they were hired to do. That is the promise. The reality, for most organizations that have tried to act on it, is considerably messier. And the reason almost always comes back to the same root cause: the orchestration is shallow.

The Promise of AI Orchestration

At its core, AI orchestration is the discipline of coordinating multiple AI models, agents, and data pipelines into a unified, automated workflow that produces reliable outputs without constant human intervention. It is not a single product or a single architectural choice. It is a capability: the ability to take a complex, multi-step analytical task and execute it end to end with enterprise-grade consistency. When it works, it is genuinely transformative. A request that once required three analysts and two days of data preparation runs in minutes. A report that used to break every time a source system updated now runs on schedule, validates itself, and lands in inboxes before anyone has to ask for it.

The market has taken notice. The global AI orchestration market was valued at over $11 billion in 2025 and is projected to reach $60 billion by 2034 (Fortune Business Insights, AI Orchestration Market Size, 2026). That growth is driven by enterprises that are done tolerating the gap between their data infrastructure investments and the business value those investments actually deliver. Data leaders in finance, marketing, operations, and research are looking for platforms that can automate the full data lifecycle: not just surface insights, but collect, transform, model, and deliver them in the formats their teams already use. The appetite is real. The execution, for most vendors in the space, is not keeping pace with the ambition.

Why Most Approaches Fall Short

The failure modes in AI orchestration are well-documented, even if they are rarely acknowledged by vendors. The first and most common is orchestrating at the wrong layer. Many platforms coordinate AI models and agents effectively but treat data preparation as someone else's problem. They assume clean, structured, analysis-ready data will already exist by the time their orchestration logic kicks in. For most enterprise teams, that assumption is wrong. Data is still fragmented across Snowflake, Salesforce, Google Analytics, Campaign Manager, SharePoint, and a dozen other systems. Harmonizing it is not a preprocessing step that happens upstream. It is the work, and any orchestration platform that does not own it end to end is pushing the hardest part of the problem back onto the analyst.

The second failure mode is LLM-driven execution. Text-to-SQL tools and conversational analytics platforms have become a popular answer to the self-service analytics problem, and for straightforward queries against a clean data model, they can look impressive in a demo. But their accuracy ceiling is a practical barrier to production use. When overall accuracy sits in the 70-75% range, at least one answer in four is wrong, so the team still has to validate every output before it goes to a client or a decision-maker. That validation work does not disappear. It just moves later in the process, where errors are harder to catch. Beyond accuracy, LLM-driven execution lacks the auditability that regulated industries and high-stakes decisions require. When an output is wrong, there is no clear trail showing which step introduced the error, which makes fixing it as much art as science.

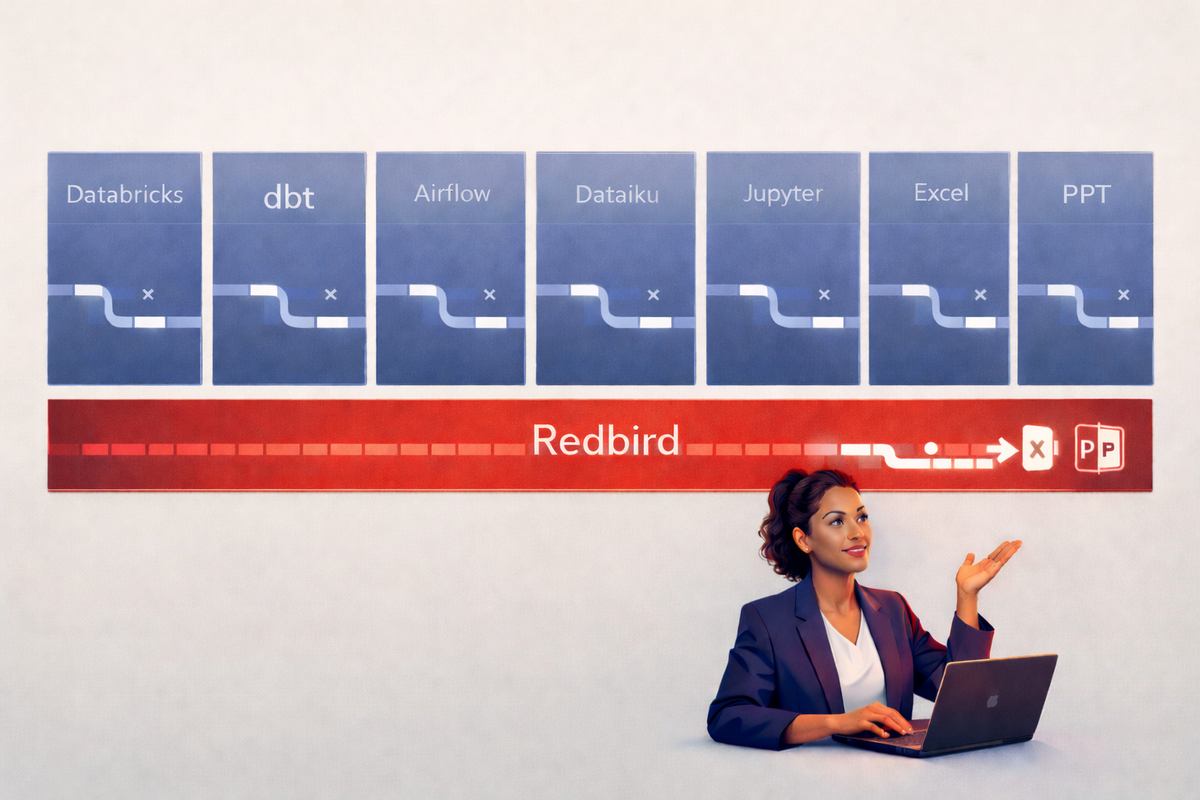

The third failure mode is scope. General-purpose AI assistants like ChatGPT, and even more targeted tools like Microsoft Copilot, operate within their own ecosystems or on public data. They cannot reach into an enterprise data warehouse, apply proprietary business logic, and deliver a formatted Excel report to a distribution list. Teams that have invested in tools like dbt, Jupyter Notebooks, Databricks, Dataiku, or Airflow know this well. Each of those tools solves a real problem within its domain. But stitching them together into a coherent, automated workflow requires constant engineering investment, and the result is fragile: a pipeline that works until a schema changes or an API breaks, and then requires a skilled data engineer to diagnose and repair. That brittleness is not an acceptable baseline for a team trying to operate at speed.

What AI Orchestration Actually Requires

Evaluating AI orchestration platforms is considerably easier once you have a clear set of requirements that reflect how enterprise data workflows actually work rather than how they are idealized in vendor documentation. The first requirement is genuine end-to-end coverage. A platform that handles transformation and analytics but requires a separate tool for data ingestion is not an orchestration platform. It is a middle layer. True orchestration means owning the entire lifecycle: connecting to the source, pulling the data reliably, transforming and harmonizing it, applying business logic, running analytical or data science models, and delivering the output in the format the business actually uses. Production-ready deliverables include formatted Excel files, populated PowerPoint presentations, and Word documents, not just dashboards. If any step in that chain falls outside the platform, the orchestration is incomplete.

The second requirement is deterministic execution. LLMs are valuable for interpreting intent and routing requests, but they are not reliable execution engines for complex, multi-step analytical workflows. An orchestration platform built for production use needs to translate natural language requests into explicit, auditable, step-by-step operations that run the same way every time. This is not an architectural preference; it is a practical requirement for any organization that needs to explain its outputs to a client, a regulator, or a senior stakeholder. If you cannot trace every transformation back to its source, the platform is not ready for enterprise use.
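To make "explicit, auditable, step-by-step operations" concrete, here is a minimal Python sketch. It is not any vendor's implementation: `Step`, `run_plan`, `fingerprint`, and the two example steps are hypothetical names chosen for illustration. The assumption is that whatever interprets the request (an LLM or otherwise) only selects named steps, and those steps then run as plain code that records a fingerprint of the data before and after each one, so the same input always yields the same audit trail.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Callable

Rows = list[dict]  # hypothetical tabular payload: one dict per row


@dataclass(frozen=True)
class Step:
    """One explicit, named operation in a plan (hypothetical structure)."""
    name: str
    fn: Callable[[Rows], Rows]


def fingerprint(rows: Rows) -> str:
    """Stable short hash of the rows; identical data always yields the same value."""
    payload = json.dumps(rows, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()[:12]


def run_plan(steps: list[Step], rows: Rows) -> tuple[Rows, list[dict]]:
    """Run steps in a fixed order and record an audit entry for each one."""
    audit = []
    for step in steps:
        before = fingerprint(rows)
        rows = step.fn(rows)
        audit.append({
            "step": step.name,
            "input": before,
            "output": fingerprint(rows),
            "rows_out": len(rows),
        })
    return rows, audit


# Hypothetical plan: the kind of steps a router might select, expressed as plain code.
plan = [
    Step("drop_null_spend", lambda rs: [r for r in rs if r.get("spend") is not None]),
    # Skips zero-click rows to avoid dividing by zero.
    Step("add_cpc", lambda rs: [{**r, "cpc": round(r["spend"] / r["clicks"], 2)}
                                for r in rs if r["clicks"] > 0]),
]

raw = [
    {"campaign": "A", "spend": 100.0, "clicks": 50},
    {"campaign": "B", "spend": None, "clicks": 10},
]
result, trail = run_plan(plan, raw)
_, trail_again = run_plan(plan, raw)
print(trail == trail_again)  # True: same input, same steps, same audit trail
```

Because each audit entry links a step's input fingerprint to its output fingerprint, a wrong number can be traced to the first step whose output diverges from expectations, which is the property the paragraph above argues LLM-driven execution lacks.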

The third requirement is accessibility for non-technical users without sacrificing power for technical ones. The orchestration problem exists precisely because the people who need data insights are not the same people who build data pipelines. A platform that requires SQL proficiency to use, or that exposes its value only to engineers, does not solve the self-service analytics problem. It moves the bottleneck. At the same time, a platform that is genuinely useful for analysts and operations teams also needs to accommodate the SQL developers, Python practitioners, and data scientists who want to build complex pipelines and integrate custom models. One platform serving the full spectrum of users, under a single governance layer, is what enterprise AI orchestration actually looks like when it is working.

What This Looks Like in Practice

Consider a marketing analytics team at a large enterprise. Every Monday, they produce a performance report that spans paid media spend from Google Ads and Facebook, organic traffic from Google Analytics, CRM data from Salesforce, and campaign attribution from Campaign Manager. Historically, this has meant a separate export from each of those five sources, a morning of VLOOKUP and reconciliation work in Excel, manual recalculation of the team's internal performance metrics, and then another hour formatting the output into the PowerPoint template the client expects. For a team like this, the process consumes the first day and a half of the week. The analysis itself takes thirty minutes. The rest is preparation.

With properly implemented AI orchestration, that workflow becomes a scheduled pipeline. A user describes the report they need in plain language or configures it once through a visual builder. The platform connects to each source, pulls the relevant data, applies the team's proprietary attribution logic, validates the output against defined quality thresholds, and delivers a formatted, presentation-ready PowerPoint to the distribution list, on schedule, without anyone pressing a button. When the data is clean and the pipeline is running, the analyst's Monday looks completely different. When something unexpected surfaces in the data, the anomaly detection layer flags it before the report goes out rather than after the client finds it. The report that used to consume a day and a half is now a review, not a production task.
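The "validate against defined quality thresholds" and "flag anomalies before the report goes out" steps can be sketched as a gate in front of delivery. This is a minimal Python illustration under stated assumptions: the thresholds, the `spend` field, the z-score anomaly check, and the names `quality_issues` and `deliver_if_clean` are all hypothetical, and a production anomaly detection layer would likely use a richer model than a single z-score against recent weeks.

```python
from statistics import mean, stdev

# Hypothetical thresholds; a real team would set these per report.
MIN_ROWS = 1
MAX_NULL_RATE = 0.05
Z_LIMIT = 3.0


def quality_issues(rows: list[dict], history: list[float]) -> list[str]:
    """Return human-readable problems; an empty list means the data passed."""
    if len(rows) < MIN_ROWS:
        return [f"only {len(rows)} rows, expected at least {MIN_ROWS}"]
    issues = []
    nulls = sum(1 for r in rows if r.get("spend") is None)
    null_rate = nulls / len(rows)
    if null_rate > MAX_NULL_RATE:
        issues.append(f"spend null rate {null_rate:.0%} exceeds {MAX_NULL_RATE:.0%}")
    # Simple anomaly check: compare this week's total to recent weeks with a z-score.
    total = sum(r["spend"] for r in rows if r.get("spend") is not None)
    if len(history) >= 2:
        mu, sigma = mean(history), stdev(history)
        if sigma > 0 and abs(total - mu) / sigma > Z_LIMIT:
            issues.append(f"total spend {total:,.0f} is far outside recent weeks (mean {mu:,.0f})")
    return issues


def deliver_if_clean(rows: list[dict], history: list[float]) -> str:
    """Hold the report for review instead of sending it when any check fails."""
    issues = quality_issues(rows, history)
    if issues:
        return "held for review: " + "; ".join(issues)
    return "delivered"  # stand-in for sending the formatted deliverable


recent_weekly_spend = [1000.0, 1050.0, 980.0, 1020.0]  # illustrative numbers only
print(deliver_if_clean([{"spend": 600.0}, {"spend": 410.0}], recent_weekly_spend))
print(deliver_if_clean([{"spend": 5000.0}], recent_weekly_spend))
```

The design choice worth noting is that a failed check holds the deliverable rather than sending it with a warning, which is what turns the analyst's Monday into a review of flagged exceptions instead of a hunt for them.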

This is not a theoretical scenario. It is the operating reality for data teams at organizations that have implemented orchestration at the right depth. The shift is not incremental. Industry surveys commonly estimate that 60-80% of data team time is consumed by manual data preparation rather than analysis. When orchestration genuinely automates that preparation layer, the capacity released is large enough to change what teams can accomplish in a week, not just how fast they complete the same tasks.

How Redbird Approaches AI Orchestration

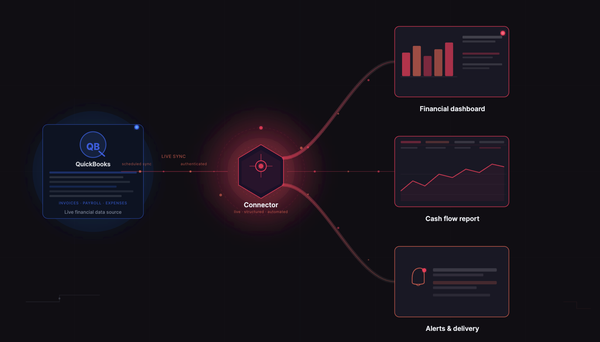

Redbird is an agentic data platform built to automate the full data lifecycle: ingestion, transformation, analytics, data science, and output delivery through a single, unified environment. The architecture is designed around the principle that LLMs should interpret intent, but should not execute complex analytical workflows. In practice, LLMs account for roughly 10% of what Redbird does: they take a natural language request and route it to the appropriate specialist agents. Everything else runs through a deterministic orchestration layer that translates those routing decisions into explicit, auditable, step-by-step operations. This design is what makes Redbird appropriate for production use in demanding enterprise environments across financial services, media, consumer goods, and technology, where accuracy, auditability, and governance are non-negotiable.

The agent ecosystem covers every layer of the data lifecycle. A Routing Agent decomposes incoming requests and dispatches them to the right specialists. Data Collection and SQL Agents pull from configured sources, including cloud warehouses like Snowflake and Databricks, enterprise systems like SAP and Oracle, SaaS platforms, and, through robotic process automation, legacy systems where no API exists. A Data Engineering Agent handles schema normalization, deduplication, and multi-source joins. An Analyst Agent applies custom business logic and KPI definitions. A Data Science Agent executes forecasting, anomaly detection, and classification workflows. A Reporting Agent assembles and formats the final deliverable using existing templates and standards. Each agent is purpose-built for its function. None of them is a general-purpose model being asked to improvise.
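The routing-and-dispatch pattern described above can be sketched in a few lines of Python. This is a hypothetical illustration, not Redbird's API: the functions `collect`, `engineer`, and `analyze` are simple stand-ins loosely modeled on the Data Collection, Data Engineering, and Analyst Agents, the sample data and the cost-per-deal KPI are invented, and the routing step is replaced by a hand-written plan. What it shows is the shape of the idea: the router only chooses which registered specialists run and in what order, and anything without a registered specialist is refused rather than improvised.

```python
from typing import Any, Callable

State = dict[str, Any]


def collect(state: State, params: dict) -> State:
    """Stand-in for a data collection agent: returns fixed sample rows per source."""
    sample = {
        "ads": [{"campaign": "A", "spend": 100.0}, {"campaign": "A", "spend": 100.0}],
        "crm": [{"campaign": "A", "deals": 3}],
    }
    return {**state, params["source"]: sample[params["source"]]}


def engineer(state: State, params: dict) -> State:
    """Stand-in for a data engineering agent: deduplicate ad rows, then join CRM on campaign."""
    seen, ads = set(), []
    for row in state["ads"]:
        key = tuple(sorted(row.items()))
        if key not in seen:
            seen.add(key)
            ads.append(row)
    crm_by_campaign = {r["campaign"]: r for r in state["crm"]}
    joined = [{**row, **crm_by_campaign.get(row["campaign"], {})} for row in ads]
    return {**state, "joined": joined}


def analyze(state: State, params: dict) -> State:
    """Stand-in for an analyst agent applying one hypothetical KPI: cost per deal."""
    kpi = {r["campaign"]: r["spend"] / r["deals"] for r in state["joined"] if r.get("deals")}
    return {**state, "cost_per_deal": kpi}


# Registry of specialists; the orchestrator only ever calls what is registered here.
AGENTS: dict[str, Callable[[State, dict], State]] = {
    "collect": collect,
    "engineer": engineer,
    "analyze": analyze,
}


def dispatch(plan: list[tuple[str, dict]]) -> State:
    """Execute a routed plan step by step, refusing any task with no registered specialist."""
    state: State = {}
    for agent_name, params in plan:
        if agent_name not in AGENTS:
            raise ValueError(f"no specialist registered for {agent_name!r}")
        state = AGENTS[agent_name](state, params)
    return state


# In a real system a routing step would produce this plan; here it is written by hand.
plan = [
    ("collect", {"source": "ads"}),
    ("collect", {"source": "crm"}),
    ("engineer", {}),
    ("analyze", {}),
]
result = dispatch(plan)
print(result["cost_per_deal"])
```

Keeping the registry closed is the key design choice in this sketch: a request that maps to no known specialist fails loudly at dispatch time instead of being handed to a general-purpose model to guess at.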

What makes the accuracy reliable over time is the Context Management System. Before any workflow runs, Redbird ingests an organization's data ontologies, business rules, metric definitions, and output templates. It auto-generates metadata across the data warehouse and continuously refines its understanding of how the organization works as usage grows. The platform does not apply generic analytical logic to proprietary data. It applies the organization's logic to the organization's data, which is what enterprise-grade accuracy actually requires. This context layer is also what allows non-technical users to request complex outputs in plain language and receive results that are aligned with their organization's standards, not just technically correct in the abstract.

Redbird's client base spans financial services, media, technology, consumer goods, energy, and retail, including some of the most data-intensive organizations in the Fortune 50. The platform has grown its customer base sevenfold since 2022. These are not organizations with simple data environments or forgiving accuracy requirements. They are organizations with large, distributed data teams, complex multi-source workflows, and real consequences when outputs are wrong. The fact that Redbird operates at scale across this client base is a more meaningful proof point than any benchmark, because it reflects what enterprise AI orchestration actually demands in production rather than in a controlled evaluation environment.

The Bottom Line

AI orchestration is not primarily an AI problem. It is a data problem with an AI solution. Platforms that invest heavily in agent coordination and conversational interfaces while treating data ingestion, transformation, and output delivery as secondary concerns will consistently underdeliver, because the work that consumes most of an analyst's week happens before any model or agent ever gets involved. Solving for orchestration at the right depth means owning the entire pipeline: from the source system to the formatted deliverable, with deterministic execution and full auditability at every step in between.

Teams that get this right do not just move faster. They fundamentally change what their analysts are capable of. When the 60-80% of time that used to go to manual data preparation is recovered, it does not just flow back into the same tasks. It opens up the space for the analysis, modeling, and decision support work that data teams were built to do. That is what properly implemented AI orchestration actually delivers, and it is the standard worth holding any platform to.